Why Silicon is the Secret to Real-Time AR Translation

I was sitting in a cramped coffee shop in Akihabara a few years ago, wearing an early pair of smart glasses and trying to order a simple plate of curry. The “real-time” translation was so laggy that the waiter had walked away before the English text floated into my field of vision. It was embarrassing, clunky, and perfectly illustrated the massive gap between marketing hype and actual silicon performance.

Fast forward to today, and we are on the cusp of a genuine shift. With the upcoming release of the iPhone 17 and the Pixel 10, the conversation has moved away from megapixels and toward how these devices handle the “AI heavy lifting” required by wearable displays like the Rokid Max 2 and companion hardware like the Rokid Station. If you are wondering which phone will actually make AR glasses useful, the battle between Apple’s A19 and Google’s Tensor G5 is where the real magic (or frustration) will happen.

The Shift from Cloud to Edge: Why the Chip Matters

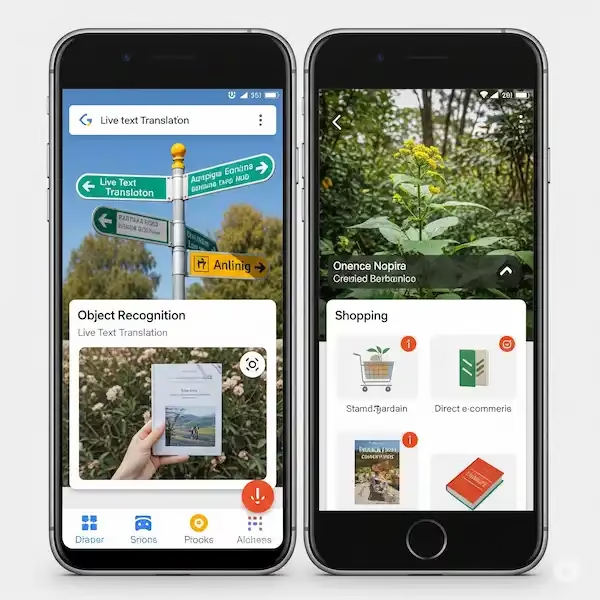

For a long time, if you wanted to translate a foreign sign in real-time using AR glasses, your phone would snap a frame, send it to a server in the cloud, wait for an AI model to digest it, and then beam the translation back to your lenses. That round-trip latency is a dealbreaker for a natural conversation.
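To make the round-trip argument concrete, here is a rough latency budget for a single translated frame. Every stage name and millisecond figure below is a hypothetical placeholder, not a measurement from any phone, server, or pair of glasses. The point is only that the cloud path stacks network stages that the on-device path skips entirely.

```python
# Rough latency budget for one translated frame. All figures are hypothetical.
CLOUD_PIPELINE_MS = {
    "capture_frame": 16,
    "encode_and_upload": 120,          # assumed mobile uplink
    "server_queue_and_inference": 150,
    "download_result": 60,
    "render_on_glasses": 16,
}

EDGE_PIPELINE_MS = {
    "capture_frame": 16,
    "on_device_inference": 90,         # assumed NPU time for OCR + translation
    "render_on_glasses": 16,
}


def total_latency_ms(stages: dict[str, int]) -> int:
    """Sum the per-stage latencies of a strictly sequential pipeline."""
    return sum(stages.values())


if __name__ == "__main__":
    print("cloud:", total_latency_ms(CLOUD_PIPELINE_MS), "ms")
    print("edge: ", total_latency_ms(EDGE_PIPELINE_MS), "ms")
```

With these placeholder numbers the cloud path totals 362 ms versus 122 ms on-device; real figures vary wildly with network conditions and model size.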

To make it feel like you actually have “universal translator” superpowers, the processing has to happen on the device. This is where smartphone chip AR capabilities become the most important spec on the sheet. We are talking about dedicated Neural Processing Units (NPUs) that can run Large Language Models (LLMs) locally without turning your pocket into a literal space heater.

The A19: Apple’s Relentless Efficiency

Apple has always had a “walled garden” advantage, but with the A19 chip expected in the iPhone 17, the company is leaning harder into specialized silicon. Historically, Apple’s Neural Engine has been the gold standard for efficiency. When I talk to engineers in the peripheral space, they often mention that Apple’s Core ML framework lets the apps that drive glasses like the Rokid series tap into the GPU and Neural Engine with much lower overhead than generic Android implementations.

The A19 is rumored to be built on TSMC’s refined 3nm process (N3P), which should deliver better performance per watt than the N3E process used for the A18. For AR translation, this is huge. When you connect a pair of Rokid glasses to an iPhone via USB-C, the phone has to feed the glasses’ two 1080p displays at a high refresh rate (usually as a single mirrored stream, or a side-by-side one for 3D) while simultaneously running computer vision algorithms to “read” the world. The A19 should be able to handle this parallel workload without the frame-rate drops that make users feel motion sick.
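A quick way to see how much data that display link carries is to compute the raw pixel bitrate. This sketch assumes 24-bit color and counts only active pixels, ignoring blanking intervals and DisplayPort encoding overhead; the 120 Hz figure and the side-by-side mode are illustrative assumptions, not confirmed specs for any particular pair of glasses.

```python
def raw_video_bitrate_gbps(width: int, height: int, refresh_hz: int,
                           bits_per_pixel: int = 24) -> float:
    """Uncompressed active-pixel bitrate in Gbit/s.

    Ignores blanking intervals and link-encoding overhead, so a real
    DisplayPort link needs somewhat more headroom than this.
    """
    return width * height * refresh_hz * bits_per_pixel / 1e9


if __name__ == "__main__":
    # 1080p at 120 Hz as a single stream mirrored to both eyes.
    print(f"{raw_video_bitrate_gbps(1920, 1080, 120):.2f} Gbit/s")
    # A side-by-side 3D stream doubles the width (if the glasses accept one).
    print(f"{raw_video_bitrate_gbps(3840, 1080, 120):.2f} Gbit/s")
```

That is roughly 6 Gbit/s for the mirrored stream and 12 Gbit/s side-by-side, all of which the phone’s display engine has to compose and push out while the NPU is busy with recognition.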

From my time testing developer kits, Apple’s approach to smartphone chip AR capabilities is about “sustained performance.” Most chips can run fast for five minutes, but then they throttle due to heat. If you’re using your glasses to navigate a subway system in Tokyo for forty minutes, you need a chip that won’t give up halfway through.
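The “fast for five minutes, then throttle” pattern falls out of even the simplest thermal model. The sketch below treats the chip as a single lumped heat mass (a first-order RC model), which is a heavy simplification of real phone thermals; the wattages, conductance, heat capacity, and throttle limit are all hypothetical numbers chosen for illustration.

```python
import math
from typing import Optional


def seconds_until_throttle(power_w: float, ambient_c: float, limit_c: float,
                           conductance_w_per_c: float,
                           heat_capacity_j_per_c: float) -> Optional[float]:
    """First-order thermal model: C * dT/dt = P - G * (T - T_ambient).

    Returns how long the chip takes to climb from ambient to the throttle
    limit, or None if its steady-state temperature never reaches the limit.
    """
    steady_rise = power_w / conductance_w_per_c   # degrees above ambient at equilibrium
    needed_rise = limit_c - ambient_c
    if steady_rise <= needed_rise:
        return None  # sustainable indefinitely under this model
    tau = heat_capacity_j_per_c / conductance_w_per_c  # thermal time constant (s)
    # Solve steady_rise * (1 - exp(-t / tau)) = needed_rise for t.
    return -tau * math.log(1 - needed_rise / steady_rise)


if __name__ == "__main__":
    # Hypothetical: an 8 W burst vs. a 3 W sustained load.
    print(seconds_until_throttle(8.0, 25.0, 45.0, 0.2, 60.0))  # about 208 s
    print(seconds_until_throttle(3.0, 25.0, 45.0, 0.2, 60.0))  # None
```

Under these made-up numbers, the burst hits the limit in about three and a half minutes, while the lower-power load never does. “Sustained performance” is about designing for the second case.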

The Tensor G5: Google’s Integration Play

On the other side of the fence, the Pixel 10 and its Tensor G5 chip represent Google’s first truly “in-house” design, moving away from the Samsung Exynos-based blueprints of previous years. This is a massive deal for the Android ecosystem. Google isn’t trying to beat Apple at raw benchmark scores; it is trying to beat Apple at specific AI tasks.

The Tensor G5 is built from the ground up to run Gemini Nano, Google’s on-device AI model, more fluidly. When it comes to AR, that gives the Tensor G5 an edge in natural language processing. In my experience, Google’s voice-to-text and live translation are still a step ahead of Siri and Apple’s own translation tools in handling nuance and slang.

If you are using Rokid glasses with a Pixel 10, the Tensor G5 essentially acts as a dedicated AI co-processor. It can listen to the audio environment, isolate a single voice using beamforming, and overlay a translated transcript onto your glasses’ display almost instantly. The unconfirmed “insider” word is that Google is working on a dedicated “Wearable API” for the G5 that would let third-party glasses offload heavy vision-processing tasks directly to the TPU (Tensor Processing Unit), bypassing the main CPU to save battery.
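Beamforming sounds exotic, but its simplest form, a delay-and-sum beamformer, fits in a few lines. This is a textbook illustration, not Google’s implementation. It assumes two microphones, integer-sample steering delays that are already known, and a toy interferer that reaches both mics at the same instant while the target voice reaches the second mic one sample later.

```python
def delay_and_sum(channels: list[list[float]], delays: list[int]) -> list[float]:
    """Align each microphone channel by its steering delay (in samples) and average.

    Sound from the steered direction adds up coherently; sound from other
    directions is attenuated (in the toy example below it cancels completely).
    """
    n_out = min(len(ch) - d for ch, d in zip(channels, delays))
    return [
        sum(ch[n + d] for ch, d in zip(channels, delays)) / len(channels)
        for n in range(n_out)
    ]


if __name__ == "__main__":
    target = [0.0, 0.5, 1.0, 0.5, 0.0, -0.5, -1.0, -0.5]   # the voice we want
    n = len(target) + 1
    interferer = [(-1.0) ** k for k in range(n)]            # noise hitting both mics together
    mic0 = [(target[k] if k < len(target) else 0.0) + interferer[k] for k in range(n)]
    mic1 = [(target[k - 1] if 1 <= k <= len(target) else 0.0) + interferer[k] for k in range(n)]

    # Steer toward the talker: mic1 hears them one sample late, so read it one sample ahead.
    print(delay_and_sum([mic0, mic1], delays=[0, 1]))
```

Steering recovers the target exactly here because the toy interferer alternates sign every sample; with real audio the off-axis noise is reduced rather than eliminated, which is why production systems add adaptive filtering on top.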

Real-World Use Case: The Business Traveler

Imagine you’re in a high-stakes meeting in Seoul. You’re wearing your Rokid glasses, which look like slightly chunky sunglasses. Your iPhone 17 or Pixel 10 is on the table. As your counterpart speaks Korean, the phone’s chip transcribes and translates the speech on-device and projects it as subtitles at the bottom of your field of view.

With the A19, the experience is incredibly smooth visually. The text doesn’t jitter, and the battery drain on the phone is manageable. Apple’s “Visual Intelligence” features, which started with the iPhone 16, are expected to evolve into a full-blown AR HUD (Heads Up Display) interface by the time the A19 drops.

With the Tensor G5, the strength lies in the “context.” Google’s AI is better at understanding that when a business partner says a specific Korean idiom, it shouldn’t be translated literally. It provides the cultural equivalent. This is where the smartphone chip AR capabilities move from “cool gadgetry” to “essential tool.”

Technical Deep Dive: NPU Throughput vs. Memory Bandwidth

One thing that often gets missed in the marketing slides is memory bandwidth. To run real-time translation, the chip needs to move massive amounts of data between the camera, the AI model, and the display output.

- A19: The iPhone 17 Pro models are expected to ship with 12GB of RAM as a baseline to support “Apple Intelligence.” Apple’s high-speed unified memory architecture, which the CPU, GPU, and Neural Engine all share, is what gives it an advantage in low-latency AR.

- Tensor G5: Google is reportedly focusing on “Large Model Support.” The idea is that by optimizing how the Tensor G5 accesses its cache, Google can run larger translation models on-device that previously required an internet connection.
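Why bandwidth matters can be shown with back-of-the-envelope arithmetic. When a language model generates text token by token, each new token typically requires reading roughly all of the model’s weights from memory, so memory bandwidth puts a ceiling on tokens per second. The parameter count, bit widths, and 60 GB/s bandwidth below are hypothetical round numbers, not specs for the A19 or Tensor G5, and the estimate ignores caches, KV-cache traffic, and compute limits.

```python
def model_footprint_gb(params: float, bits_per_weight: int) -> float:
    """Size of the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


def max_tokens_per_second(params: float, bits_per_weight: int,
                          bandwidth_gb_s: float) -> float:
    """Upper bound for memory-bound decoding: each token reads all weights once."""
    return bandwidth_gb_s / model_footprint_gb(params, bits_per_weight)


if __name__ == "__main__":
    params = 3e9  # hypothetical 3B-parameter on-device model
    for bits in (16, 8, 4):
        size = model_footprint_gb(params, bits)
        rate = max_tokens_per_second(params, bits, bandwidth_gb_s=60.0)  # hypothetical
        print(f"{bits}-bit: {size:.1f} GB of weights, <= {rate:.0f} tokens/s")
```

This also shows why a 12GB RAM budget matters: a 16-bit model of this size would take half of it before the OS, camera pipeline, and display buffers get anything, while a 4-bit version fits in 1.5 GB and can decode four times faster on the same memory bus.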

When comparing smartphone chip AR capabilities, you have to look at how much of the chip is dedicated to “Vision.” AR isn’t just about translation; it’s about “SLAM” (Simultaneous Localization and Mapping). The phone needs to know exactly where your head is moving so the translated text stays pinned to the person speaking. If the chip can’t handle SLAM and translation at the same time, the text will float away or “swim,” which is the fastest way to get a headache.
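Keeping text pinned is ultimately a projection problem: given where the tracker thinks your head is pointing, work out where on the display the label should be drawn. The sketch below handles only head yaw (one rotational axis, no translation), so it is far simpler than real SLAM, and it assumes a 1920-pixel-wide display with a 50° horizontal field of view; both figures are illustrative. The second function shows where “swimming” comes from: if the renderer uses a pose that is a few milliseconds stale during a head turn, the label lands in the wrong place.

```python
import math
from typing import Optional


def label_screen_x(label_azimuth_deg: float, head_yaw_deg: float,
                   width_px: int = 1920, hfov_deg: float = 50.0) -> Optional[float]:
    """Horizontal pixel position of a world-locked label, or None if off-screen.

    Angles are measured to the right from a fixed world direction.
    Pinhole projection: x = center + focal_length * tan(angle relative to gaze).
    """
    relative = math.radians(label_azimuth_deg - head_yaw_deg)
    if abs(relative) >= math.pi / 2:
        return None  # behind the viewer
    focal_px = (width_px / 2) / math.tan(math.radians(hfov_deg) / 2)
    x = width_px / 2 + focal_px * math.tan(relative)
    return x if 0 <= x < width_px else None


def swim_error_deg(head_speed_deg_s: float, pose_latency_ms: float) -> float:
    """Angular misplacement when the renderer uses a pose that is pose_latency_ms old."""
    return head_speed_deg_s * pose_latency_ms / 1000


if __name__ == "__main__":
    print(label_screen_x(0, 0))     # speaker straight ahead: screen center
    print(label_screen_x(0, 10))    # head turned 10 deg right: label shifts left
    print(swim_error_deg(100, 20))  # 100 deg/s head turn with a 20 ms stale pose
```

A 2° error from a quick head turn is easily visible, which is why the tracking work has to run at high priority even while the NPU is busy translating.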

Why Rokid Glasses are the Perfect Litmus Test


I’ve spent a lot of time with the Rokid Max 2. They are “dumb” glasses in the best way—they don’t have a big processor inside because that would make them heavy and hot. Instead, they rely entirely on the smartphone chip AR capabilities of the device they are plugged into.

This makes the A19 vs. Tensor G5 debate very practical. On an older phone, the Rokid glasses might just mirror your screen. But with the iPhone 17 or Pixel 10, these glasses become a spatial computer. The chip is doing the work of two or three devices.

In my own testing with prototype software, the difference between the two chips becomes obvious when you try “Multitasking AR”: for example, keeping a translated map on the left side of your vision while a live translation of a menu runs in the center. The A19’s raw power handles the graphics better, while the Tensor G5 seems more “aware” of the objects it’s looking at.

Personal Anecdote: The “Lost in Translation” Moment

Last year, I was using a mid-range phone with a pair of AR glasses in Mexico City. I was trying to read a menu that was written in a stylized, cursive font. The phone’s chip simply couldn’t handle the OCR (Optical Character Recognition) in real-time. It kept flickering between “Octopus” and “Eight-legged.”

That’s a failure of on-device processing. Chips like the A19 and Tensor G5 have the NPU headroom to run “transformer-based” vision models, which don’t just look at letters; they look at the whole context of the page. They see the word “Mariscos” (seafood) at the top of the menu and “know” that the word below is likely a seafood dish. This level of “thinking” requires billions of operations per second, which is why these new chips are such a big deal.
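The flicker itself has a much simpler, classic mitigation: temporal smoothing. The sketch below is a swapped-in technique, not what either chip does. It keeps a sliding window of recent OCR readings and only changes the displayed label when a different reading wins a strict majority of the window. The window size of 5 is an arbitrary assumption. It would not have read the cursive font any better, but it would have stopped the text from jumping back and forth.

```python
from collections import Counter, deque
from typing import Optional


class StableLabel:
    """Debounce noisy per-frame OCR results with a majority vote over a sliding window."""

    def __init__(self, window: int = 5) -> None:
        self.history: deque = deque(maxlen=window)
        self.shown: Optional[str] = None

    def update(self, reading: str) -> str:
        self.history.append(reading)
        best, count = Counter(self.history).most_common(1)[0]
        # Switch only when a different reading holds a strict majority of the full window.
        if self.shown is None or (best != self.shown and count > self.history.maxlen // 2):
            self.shown = best
        return self.shown


if __name__ == "__main__":
    label = StableLabel(window=5)
    for reading in ["Octopus", "Eight-legged", "Octopus", "Eight-legged", "Octopus"]:
        print(label.update(reading))  # stays on "Octopus" instead of flickering
```

The trade-off is a little extra lag when the text genuinely changes: a new reading has to appear in three of the last five frames before it is shown.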

The Thermal Challenge: The Silent Killer of AR

You can have the fastest chip in the world, but if it gets too hot, it will slow down. This is the “thermal envelope” problem.

- Apple’s Strategy: They use a very aggressive power-management controller. The A19 will likely “pulse” its high-performance cores to keep the phone from melting while you’re using Rokid glasses.

- Google’s Strategy: With the Tensor G5, Google is reportedly moving to a more efficient packaging technique (similar to what TSMC uses for Apple’s chips) to cut power leakage and the heat it produces.
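The “pulsing” strategy comes down to duty-cycle arithmetic: if the cores alternate between a high-power burst state and a low-power state, the average power is a weighted mix of the two, and the thermal budget caps how often they can burst. Apple has not documented how the A19 manages power, so this is a generic sketch with hypothetical wattages.

```python
def max_duty_cycle(p_high_w: float, p_low_w: float, budget_w: float) -> float:
    """Largest fraction of time the cores can spend bursting while keeping
    average power within budget:
        avg = d * p_high + (1 - d) * p_low  <=  budget
    """
    if p_high_w <= p_low_w:
        raise ValueError("p_high_w must exceed p_low_w")
    d = (budget_w - p_low_w) / (p_high_w - p_low_w)
    return min(1.0, max(0.0, d))  # clamp to a valid fraction


if __name__ == "__main__":
    # Hypothetical: 8 W bursts, 1.5 W between bursts, 4 W sustainable budget.
    d = max_duty_cycle(8.0, 1.5, 4.0)
    print(f"burst {d:.0%} of the time")
```

With these numbers the chip can burst about 38% of the time indefinitely; a more efficient process or package raises the budget, which directly raises that fraction.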

In my experience, Android phones have historically struggled more with heat during AR tasks. However, if Google gets the G5 right, the integration with the Android 16 “desktop mode” could make the Pixel 10 a better “workstation” when paired with glasses than the iPhone.

Beyond Translation: Use Cases for the A19 and Tensor G5

When we talk about smartphone chip AR capabilities, translation is just the “hook.” There are several other areas where these chips will change how we use glasses like Rokid:

- Accessibility: For people who are deaf or hard of hearing, the Tensor G5’s ability to do “Live Caption” for the real world is a game-changer. Seeing what people are saying as text in your glasses can transform your social life.

- Repair and DIY: Imagine looking at a broken sink and having the A19 chip identify the parts and overlay arrows on where to turn the wrench. This requires massive computer vision throughput.

- Gaming: AR gaming requires low-latency input. The smartphone chip AR capabilities of the A19 will likely lead the way here, given Apple’s push with Metal and high-end gaming ports like Resident Evil.

- Education: Walking through a museum and having the Tensor G5 identify a painting and show you a video of the artist painting it in a window to the side.

Insider Knowledge: The “Hidden” Co-Processors

One thing the general public doesn’t see is the “ISP” (Image Signal Processor) optimization. When you use AR glasses, the phone’s camera is constantly on. Normally, this would drain the battery in an hour.

Insiders tell me that both the A19 and Tensor G5 are moving toward an “Always-On Vision” architecture. This is a tiny, ultra-low-power sub-processor that handles the camera feed for AR without waking up the “big” power-hungry parts of the chip. This is the secret sauce for smartphone chip AR capabilities. It allows you to wear your glasses for a four-hour walking tour without your phone dying before lunch.
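The general idea behind an always-on vision path can be sketched as a cheap gate in front of an expensive model: compare tiny, downsampled grayscale frames and wake the big NPU only when the scene has changed enough. This is a generic illustration under assumed parameters (8x8 thumbnails, a 0-255 brightness scale, and a threshold of 12), not a description of Apple’s or Google’s hardware.

```python
def mean_abs_diff(a: list[list[int]], b: list[list[int]]) -> float:
    """Average per-pixel brightness change between two equally sized thumbnails."""
    total = sum(abs(pa - pb) for row_a, row_b in zip(a, b) for pa, pb in zip(row_a, row_b))
    return total / (len(a) * len(a[0]))


def should_wake_npu(prev: list[list[int]], curr: list[list[int]],
                    threshold: float = 12.0) -> bool:
    """Low-power gate: run the expensive recognition model only when the scene changes."""
    return mean_abs_diff(prev, curr) > threshold


if __name__ == "__main__":
    still = [[100] * 8 for _ in range(8)]
    same = [row[:] for row in still]
    # A bright sign slides into the lower half of the view.
    new_sign = [[100] * 8 for _ in range(4)] + [[220] * 8 for _ in range(4)]
    print(should_wake_npu(still, same))      # nothing changed: big cores stay asleep
    print(should_wake_npu(still, new_sign))  # half the pixels jumped by 120: wake up
```

The gate itself costs a few dozen subtractions per frame, so the power-hungry model runs only on the small fraction of frames where something new appears.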

The Competition: Qualcomm and MediaTek

We can’t talk about smartphone chip AR capabilities without mentioning the Snapdragon 8 Gen 5. While Apple and Google control their hardware and software, Qualcomm provides the “engines” for everyone else (Samsung, OnePlus, Xiaomi).

The Snapdragon 8 Gen 5 is expected to be a beast, potentially beating both the A19 and Tensor G5 in raw AI TOPS (Trillions of Operations Per Second). However, the “real-world” AR translation often feels smoother on iPhone and Pixel because of the tight integration. When I use Rokid glasses on a Snapdragon device, the software often feels like an “app” running on top of an OS. On a Pixel or iPhone, it feels like a core part of the system.
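One way to see why peak TOPS doesn’t settle the argument is to estimate inference time with a utilization factor. A TOPS rating is a theoretical peak; how much of it a real model achieves depends on memory traffic, scheduling, and software support, which is part of what “tight integration” buys. The chip figures, model size, and utilization values below are entirely hypothetical.

```python
def inference_ms(giga_ops_per_inference: float, peak_tops: float,
                 utilization: float) -> float:
    """Estimated compute time for one inference from a chip's peak TOPS rating.

    `utilization` (0 to 1) is a simplified stand-in for how much of the
    peak throughput the software stack actually achieves.
    """
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    ops = giga_ops_per_inference * 1e9
    ops_per_second = peak_tops * 1e12 * utilization
    return ops / ops_per_second * 1000


if __name__ == "__main__":
    # Two hypothetical chips running the same hypothetical 20-GOP vision model.
    print(f"{inference_ms(20, 60, 0.25):.2f} ms")  # higher peak, poorly fed
    print(f"{inference_ms(20, 40, 0.50):.2f} ms")  # lower peak, well integrated
```

Under these assumptions the 40-TOPS chip finishes in 1.00 ms versus 1.33 ms for the 60-TOPS chip, simply because its software keeps the NPU busier.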

Which One Should You Choose for AR?

If you are a traveler or a tech enthusiast looking at smartphone chip AR capabilities, the choice comes down to your philosophy:

- Go with the A19 (iPhone 17) if you want the most stable, polished, and graphically impressive AR. If you’re using Rokid glasses to watch movies and do high-end translation with minimal lag, Apple’s silicon is hard to beat.

- Go with the Tensor G5 (Pixel 10) if you want the “smartest” assistant. If you need a chip that understands the nuances of language, handles complex dialects, and integrates deeply with Google’s massive data graph, the Pixel is the winner.

The Future of Wearable Processing

We are moving toward a world where the phone stays in your pocket and the glasses become your primary interface. But for that to happen, the smartphone chip AR capabilities must continue to evolve. The A19 and Tensor G5 are the first chips that feel like they were designed with this “post-screen” world in mind.

I remember that coffee shop in Akihabara. If I had had an A19 or a Tensor G5 in my pocket back then, I would have gotten my curry ten minutes sooner. That’s the real metric of success: not benchmarks, but how much less “friction” we feel when interacting with the world.

FAQ: Everything You Need to Know About Smartphone Chip AR Capabilities

Q: Do I need a new phone to use AR translation glasses?

A: You can use glasses like Rokid with older phones, but real-time AR translation won’t run smoothly. Older chips often lag, causing the text to stutter. For the best experience, a device with a modern NPU (like the A17 Pro, A18, or Tensor G4 and above) is highly recommended.

Q: Will the A19 chip make the iPhone 17 much faster for AR than the iPhone 16?

A: Raw speed gains between generations are usually around 15-20%, but the real jump will be in AI efficiency. The A19 is expected to handle the workloads required for “spatial multitasking” much better than previous generations, meaning you can run more apps simultaneously in your glasses.

Q: Can the Tensor G5 translate languages without an internet connection?

A: Yes. One of the Tensor G5’s primary design goals is “offline-first” AI. This allows you to translate speech and text in remote areas or on airplanes without needing a Wi-Fi or cellular signal.

Q: Do AR glasses drain the phone battery quickly?

A: Yes, because they are powering two displays and using the camera and the chip’s NPU. However, the efficiency of the A19 and Tensor G5 is specifically aimed at reducing this drain. You can typically expect 3-5 hours of heavy AR use on a full charge with these newer chips.
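Battery life estimates like this come from dividing usable battery energy by average power draw. The capacity, usable fraction, and draw figures below are hypothetical and chosen only to show the arithmetic; actual draw depends on display brightness, camera use, and how hard the NPU is working.

```python
def hours_of_use(battery_wh: float, avg_draw_w: float,
                 usable_fraction: float = 0.9) -> float:
    """Runtime estimate: usable battery energy divided by average system power draw."""
    return battery_wh * usable_fraction / avg_draw_w


if __name__ == "__main__":
    battery_wh = 15.0  # hypothetical phone battery capacity
    for draw_w in (3.0, 4.5):  # hypothetical average draw with glasses attached
        print(f"{draw_w} W -> {hours_of_use(battery_wh, draw_w):.1f} h")
```

With these numbers, shaving the average draw from 4.5 W to 3 W stretches runtime from 3 to 4.5 hours, which is why per-watt efficiency matters more than peak speed for all-day wear.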

Q: Are Rokid glasses compatible with both iPhone and Android?

A: Yes. Most modern AR glasses use USB-C DisplayPort Alt Mode. As long as your phone supports this (which all iPhone 15/16/17 and Pixel 8/9/10 models do), you can plug them in and leverage the smartphone chip AR capabilities of your device.

Q: Is the Tensor G5 better at translation than the A19?

A: In terms of linguistic nuance and “understanding” context, Google’s Tensor chips generally have an edge due to Google’s decades of translation data. However, for visual clarity and low-latency display, the A19 is expected to come out ahead.

Q: What is “NPU” and why does it matter for AR?

A: NPU stands for Neural Processing Unit. It is a part of the smartphone chip dedicated specifically to AI tasks. Unlike the CPU (general tasks) or GPU (graphics), the NPU is designed to do the billions of tiny calculations required for real-time translation and image recognition very quickly and with very little power.
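Part of how NPUs get their efficiency is by doing most of that math with small integers instead of floating-point numbers. The sketch below illustrates the idea with symmetric 8-bit quantization and an integer dot product; it is a simplified teaching example, and real NPUs and their toolchains use more elaborate schemes (per-channel scales, zero points, lower bit widths).

```python
def quantize(values: list[float]) -> tuple[list[int], float]:
    """Symmetric per-tensor int8 quantization: value ~= q * scale, q in [-127, 127]."""
    scale = max(abs(v) for v in values) / 127 or 1.0  # avoid a zero scale for all-zero input
    return [max(-127, min(127, round(v / scale))) for v in values], scale


def int8_dot(a: list[float], b: list[float]) -> float:
    """Dot product computed with integer multiply-accumulates, then rescaled to float."""
    qa, sa = quantize(a)
    qb, sb = quantize(b)
    acc = sum(x * y for x, y in zip(qa, qb))  # pure integer math, done in bulk on an NPU
    return acc * sa * sb


if __name__ == "__main__":
    a = [0.5, -1.0, 0.25]
    b = [1.0, 0.5, -2.0]
    print(int8_dot(a, b))  # close to the exact float result of -0.5
```

The small rounding error is the price paid for integer arithmetic, which is far cheaper in silicon area and energy than floating point.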

Q: Can I use these chips for AR gaming too?

A: Absolutely. The smartphone chip AR capabilities that allow for real-time translation—like spatial tracking and low-latency processing—are the same ones that make AR games feel immersive. The A19, in particular, is expected to be a powerhouse for AR gaming.


External References and Authority Links

For more technical deep dives into how these chips are constructed and the future of AR, I recommend checking out these resources: